CV and Portfolio

Biography

I’m Thomas Roughton, a programmer, dabbling designer, hobbyist musician and photographer, and musical theatre enthusiast. I’m based in Wellington, New Zealand, and you can hire me for game, graphics, or engine work. I can work on-site in Wellington or remotely, and can adapt to a wide range of development practices and tech stacks.

I specialise in computer graphics and real-time rendering, where I’ve contributed techniques such as progressive least squares encoding. I have expert-level experience with the Metal rendering API and experience with OpenGL, and have also written a Vulkan backend for my render-graph-based rendering framework.

I am highly motivated, have high standards, and learn quickly. I’m constantly exploring possibilities, finding ways that things could be done better, and acting upon them. I strongly value the end user experience in my work, whether that be a consumer, artist, or API user, and am excited more by what technology enables people to do than by the technology itself.

My background in programming began with writing iOS apps in Objective-C at the age of 13. Since 2015, I’ve been writing most of my code in the Swift programming language, and have contributed to the compiler. I also have significant experience with C, C++, Java, C#, and Python. The promise of languages like Swift and Rust excites me, and I’m looking forward to seeing the industry move away from C and C++.

For the past few years, I’ve worked in conjunction with Joseph Bennett to develop a cross-platform game engine in Swift (components of which can be found on my GitHub). This has been a great opportunity to learn about a wide range of topics, including engine architecture, data-oriented design, different graphics APIs, various rendering techniques, allocator management, SIMD programming, and more.

Published Works

Also available in more detail here.

- ZH3: Quadratic Zonal Harmonics (2024).

- Progressive Least-Squares Encoding for Linear Bases (2019).

- Interactive Generation of Path-Traced Lightmaps (Master’s Thesis, 2019).

Qualifications

- Master of Science in Computer Graphics with Distinction (Victoria University of Wellington, 2019). Thesis: Interactive Generation of Path-Traced Lightmaps.

- Bachelor of Science, majoring in Computer Science and minoring in Media Design (A+ average) (Victoria University of Wellington, 2016).

- NZQA Outstanding Scholarships in Calculus and Classics, and Scholarships in Physics, Chemistry, and Technology.

- NCEA Level 3 with Excellence.

Work Experience

Software Development

- Senior Research Engineer (on contract) at Activision’s Central Technology Group (May 2023 – Present), working on the lighting pipeline for the Call of Duty series of games.

- Lead Graphics Engineer (on contract) for LiveSurface (March 2020 – Present), building the renderer and content workflows for their new Mac application.

- Graphics/Engine Development for Confetti FX (September 2019 – March 2020), working primarily on The Forge. My contributions included developing the new file system APIs, implementing a DXR-like path tracing backend for Metal, and implementing demos for VR interaction and rendering techniques.

- Internship at Weta Digital (November 2017 – February 2018), working on skin rendering. I researched and implemented a novel solution for thick- and thin-surface subsurface scattering within their in-house OpenGL preview renderer Gazebo, meeting the constraints of their production pipeline.

- Full-time summer work at TouchTech (January – February 2015) as an iOS engineer. I extended the iPad version and led development on the iPhone version of the UBank app, and was brought on to the Ngā Tapuwae Gallipoli app to address bugs and UI issues. Despite my short time in this role, I quickly came to be regarded as an expert within the team, and was referred many difficult problems that the team had been struggling with, which I went on to solve.

- Sole developer of CubeTimer (2011 – 2012), an iOS speedcubing application. This started as a hobby when some friends wanted to time how quickly they could solve Rubik’s cubes but quickly expanded into a fully-featured application.

I also contribute to the open-source Swift programming language and actively develop my own graphics framework called SwiftFrameGraph. You can find some of my code on GitHub, in varying states of production-readiness.

Web Development

- Orb Solutions (2018), adapting an external designer’s concepts into a Squarespace template.

- Dragonfly Hair Design (2012), designing and building a clean HTML + CSS website to match their brand.

Portfolio

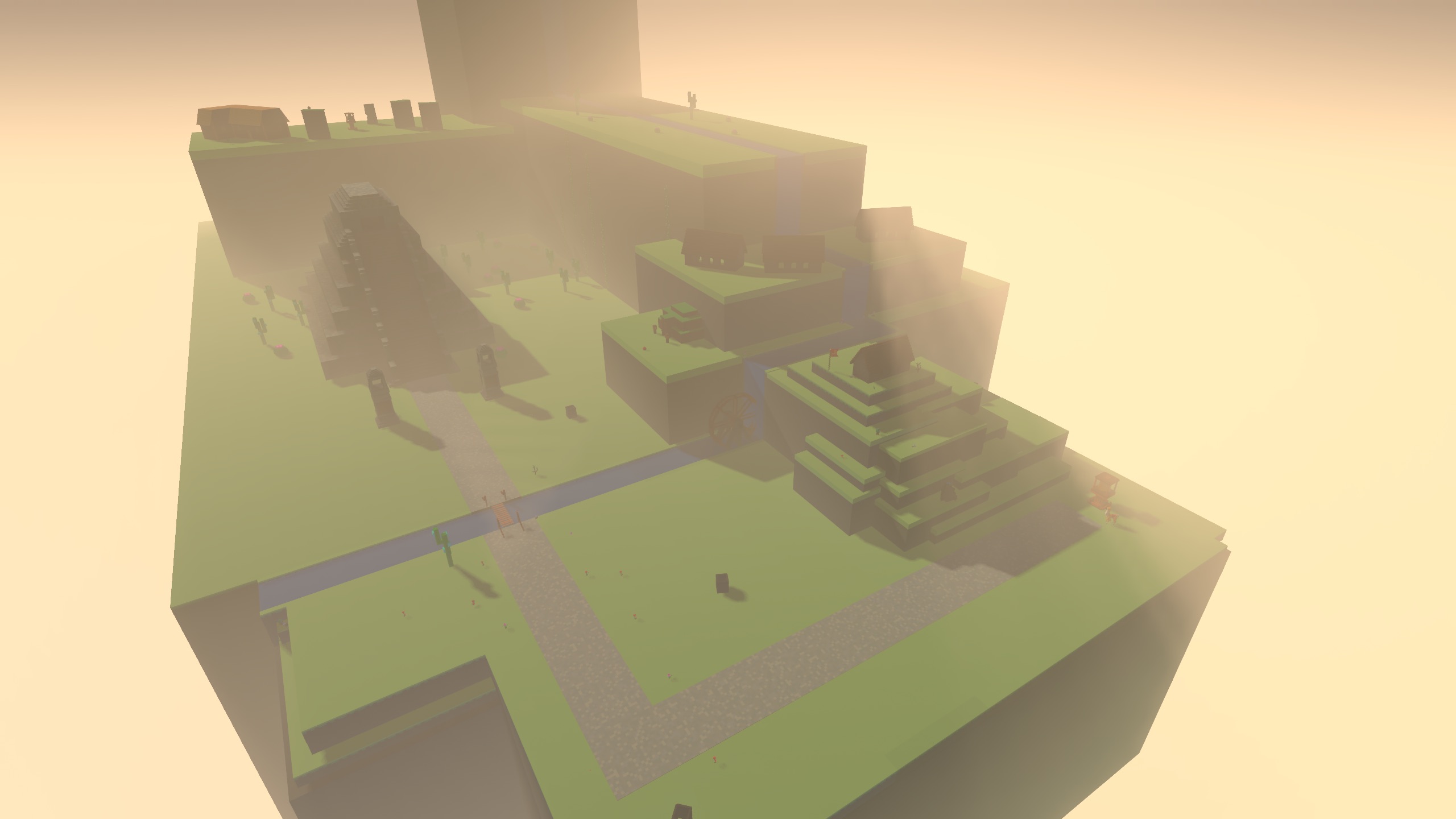

‘Interdimensional Llama’ (2016)

Interdimensional Llama was a perspective-switching puzzle game made in conjunction with Phoebe Zeller, Casey Garnock-Jones, Joseph Bennett, Jiaheng Wang, and Cameron Hopkinson. You play as Lou, a llama with the ability to switch between 2D and 3D to navigate obstacles in the world and get to the goal.

Along with helping to design the rules for the game, I worked on C# gameplay code within Unity, primarily contributing to the grid-based navigation system and camera management code.

‘Atmospheric Llama’ (2017)

For a university course, the task was to select and implement a modern graphics technique. I chose temporal antialiasing and worked alongside Joseph Bennett, who implemented frustum-based volumetrics.

(I recommend watching the video at 1.5x to 2x speed.)

To add some extra challenge, we decided to implement this within our own engine, which was written more-or-less from the ground up for this project. This ended up including implementing an early version of SwiftFrameGraph, a layer-based animation system, local light probes, clustered shading, and BVH-based frustum culling, all in the space of twelve part-time weeks. There are many technical issues in the final result – directional shadows are particularly rough – but I’m still proud of what we managed to achieve.

‘Official Business’ (2017)

For this university project, the task was to composite synthetic objects into a real scene using only a modified version of PBRT (i.e. no Photoshop adjustments). I implemented differential rendering within PBRT, constructed proxy geometry to match the photographed scene, and tweaked materials.

The image below contains four synthetic objects. See if you can spot them!

Source image:

Proxy Geometry:

‘Illumination’ (2016)

Illumination is a semi-procedural real-time animation driven by a music track I created. The MIDI events for the music were exported from my DAW and imported into our custom-built 3D engine, which then used them to apply scripted animation and lighting changes to a scene imported from Maya. The animation makes use of physically-based rendering and LTC-based polygonal area lights.

Modelling and Animation

All of the projects below were completed within fourteen weeks as part of a part-time university course.

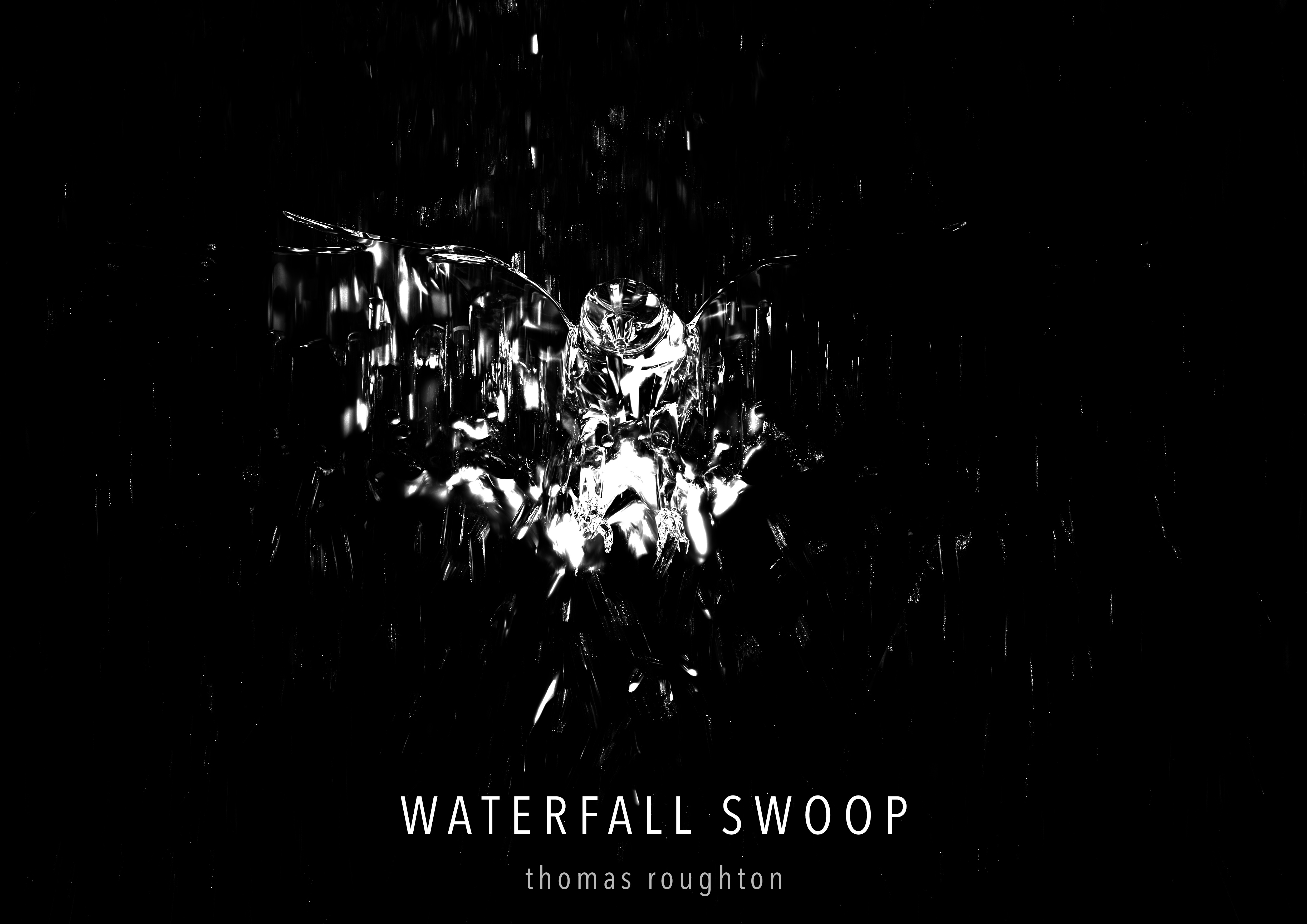

‘Waterfall Swoop’ (2015)

Waterfall Swoop was the first project in a modelling and animation course. I modelled the hawk, used Maya particles to generate a waterfall, and then animated the scene to achieve the motion blur in the final render.

‘March to Scurry’ (2015)

Our task for this project was to take a model someone else had made (in my case, an ant) and transform it into a different model; I chose a living computer mouse. I modelled everything apart from the ant in this scene and animated it all.

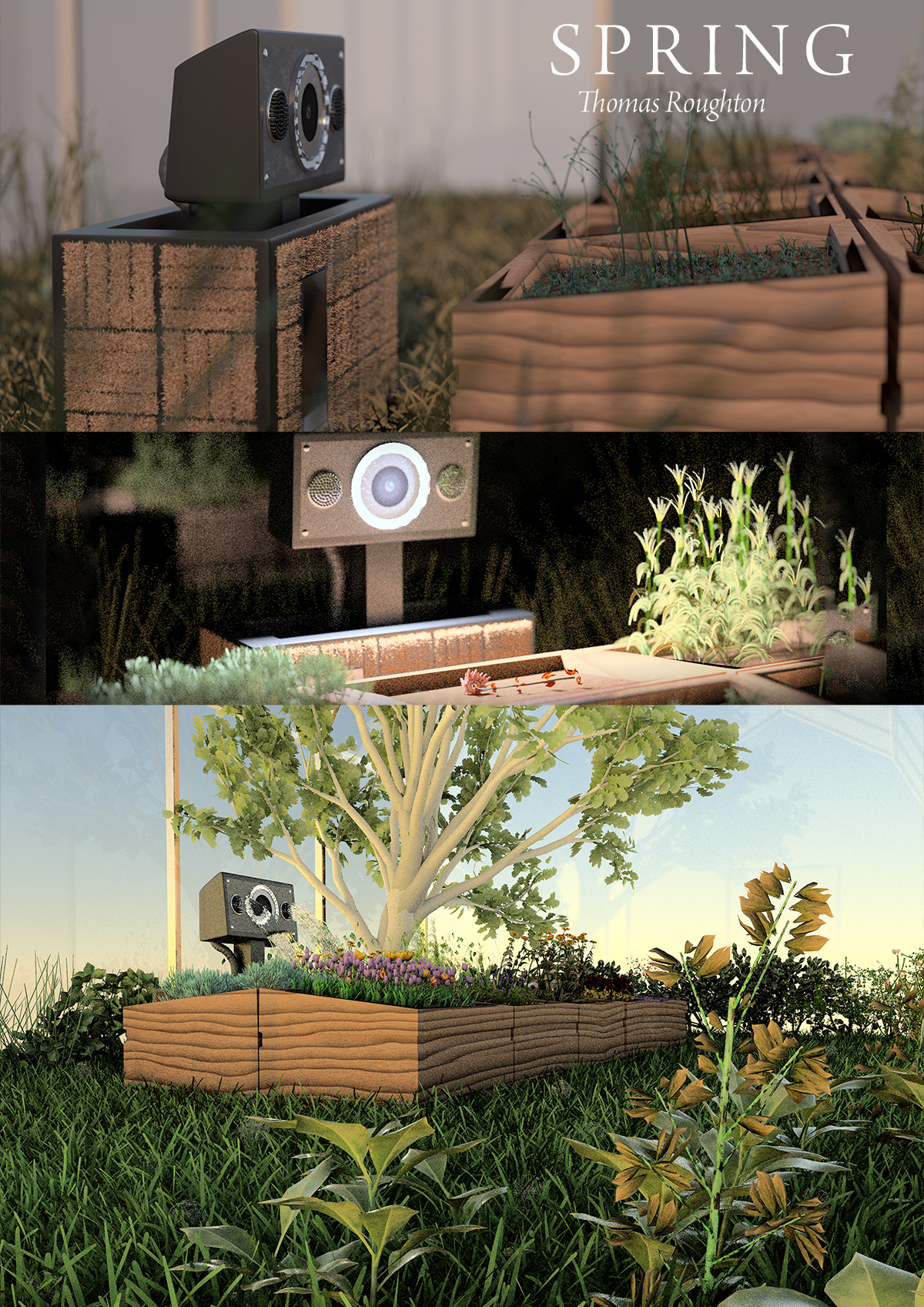

‘Spring’ (2015)

For this project, we had to design and animate our own short film. Spring is about a greenhouse robot trying to survive the winter.

Spring was an interesting challenge because I had unwittingly created a very geometrically complex scene to render. I spent the last few weeks of the project scaling down the scene complexity to the point where I could meet the deadline; as such, the rendering quality suffers in a few places and I wasn’t quite able to get the level of polish I wanted.